CUDA Rasterizer

Monday, November 19, 2012

Sunday, November 4, 2012

More additions

The following have now been implemented:

Back Face Culling

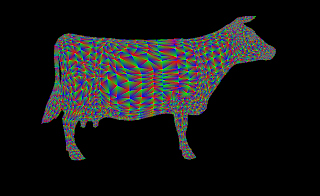

This is implemented as part of the rasterizer, which uses the normal data to skip the faces pointing away from the viewer. For flat shading of a scaled-up, rotated version of the cow, I get the same fps with or without back face culling. Just over 2700 triangles were culled in this view.
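
As a rough illustration of the test described above, here is a minimal host-side C++ sketch (in CUDA the functions would carry `__host__ __device__`). The names `Vec3`, `dot` and `isBackFacing` are made up for this example, and it assumes the face normal and the eye-to-face vector are expressed in the same coordinate space. The post does not say which space the actual rasterizer uses.

```cpp
#include <array>

using Vec3 = std::array<float, 3>;

// Dot product of two 3-vectors.
inline float dot(const Vec3& a, const Vec3& b) {
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}

// Returns true when a face should be culled.
// `normal` is the face normal and `eyeToFace` points from the camera to any
// point on the face; both are assumed to be in the same space.
// A normal that points along the viewing direction means the face looks away.
inline bool isBackFacing(const Vec3& normal, const Vec3& eyeToFace) {
    return dot(normal, eyeToFace) >= 0.0f;
}
```

Culling only skips work for hidden faces, so on a small model it may well leave the frame rate unchanged, as observed above.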

Anti-aliasing

I first performed antialiasing on each and every primitive. This worked fine for a single primitive, but on a model I get lines at the junctions where the primitives meet. This was done in the vertex shader itself. The image is anti-aliased, but the artifacts are distracting. This runs at 12 fps, the same as the normal case.

So I performed anti-aliasing using a higher resolution (4 times the normal resolution) in the rasterizer and performed shading at the higher resolution before writing to the frame buffer. I get 0.5 to 1 fps with this method instead of 12 fps.

The smoothness can be clearly seen in the antialiased model. This was created using a 9*9 mask.
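
To make the supersampling idea concrete, here is a minimal C++ sketch of the final step: averaging a high-resolution buffer down to the display resolution. It assumes a plain box filter with an integer factor `s` and a single-channel image; the function name `downsample` and the buffer layout are illustrative only, and the filter actually used for the images in this post may differ.

```cpp
#include <cstddef>
#include <vector>

// Averages each s x s block of a supersampled image into one output pixel.
// `hi` holds (w * s) x (h * s) samples in row-major order; the result is w x h.
std::vector<float> downsample(const std::vector<float>& hi,
                              std::size_t w, std::size_t h, std::size_t s) {
    std::vector<float> lo(w * h, 0.0f);
    const std::size_t hiWidth = w * s;
    for (std::size_t y = 0; y < h; ++y) {
        for (std::size_t x = 0; x < w; ++x) {
            float sum = 0.0f;
            for (std::size_t dy = 0; dy < s; ++dy) {
                for (std::size_t dx = 0; dx < s; ++dx) {
                    sum += hi[(y * s + dy) * hiWidth + (x * s + dx)];
                }
            }
            lo[y * w + x] = sum / static_cast<float>(s * s);
        }
    }
    return lo;
}
```

Every output pixel costs `s * s` rasterized and shaded samples, which goes some way toward explaining the drop in frame rate.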

Z-Buffer Visual

I found the minimum and maximum depth values and mapped each pixel's depth to a shade between 0.15 and 0.85, with 0.15 being the closest to the camera. This can be seen in the above picture.

Antialiasing a Primitive

Model with primitive antialiasing

You can clearly see the lines where the 2 triangles meet. The depth test puts only one color there, which is lighter due to antialiasing, and this causes the lines to appear. (Though it looks like I have enabled mesh display :))

Antialiased Model

Comparison: Left - Non antialiased, Right - Antialiased

That's it for now for the rasterizer. Sadly have to get back to other work.

Saturday, November 3, 2012

Shading

Started work on the fragment shader. Extended flat shading to diffuse as well as specular shading. Included multiple directional light sources with light color. Also did some stream compaction to save on threads during fragment shading: it removes all the pixels that add nothing to the data in the framebuffer. The number of threads dropped from 640,000 to 63,000 for the images below.
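
For readers unfamiliar with stream compaction, here is a serial C++ stand-in for the idea. The `Fragment` struct and its `covered` flag are hypothetical, invented for this sketch. On the GPU the same step would run in parallel, for example with Thrust's `thrust::remove_if` or `thrust::copy_if`; the post does not say which approach was actually used.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

struct Fragment {
    float r, g, b;
    bool covered;  // hypothetical flag: true when some primitive wrote this pixel
};

// Removes uncovered fragments in place, keeping the survivors in their
// original order, and returns how many fragments are left to shade.
std::size_t compactFragments(std::vector<Fragment>& frags) {
    auto newEnd = std::remove_if(frags.begin(), frags.end(),
                                 [](const Fragment& f) { return !f.covered; });
    frags.erase(newEnd, frags.end());
    return frags.size();
}
```

After compaction, one shading thread is launched per surviving fragment rather than per pixel, which is where the thread count saving comes from.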

Diffuse Shading

Specular+Diffuse Shading

Multiple lights with different colors
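
A minimal sketch of diffuse plus specular shading with several colored directional lights follows. It assumes Lambert diffuse and a Blinn-Phong specular term; the post does not name the specular model, so that part is a guess. The names `DirectionalLight`, `shade` and `shininess` are illustrative only.

```cpp
#include <algorithm>
#include <array>
#include <cmath>
#include <cstddef>
#include <vector>

using Vec3 = std::array<float, 3>;

inline float dot(const Vec3& a, const Vec3& b) {
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}

// Returns v scaled to unit length; a zero vector is returned unchanged.
inline Vec3 normalize(const Vec3& v) {
    float len = std::sqrt(dot(v, v));
    if (len == 0.0f) return v;
    return {v[0] / len, v[1] / len, v[2] / len};
}

struct DirectionalLight {
    Vec3 toLight;  // unit vector from the surface toward the light
    Vec3 color;    // RGB intensity of the light
};

// n: unit surface normal, toEye: unit vector from the surface to the camera.
// Sums Lambert diffuse and Blinn-Phong specular over all lights.
Vec3 shade(const Vec3& n, const Vec3& toEye, const Vec3& albedo,
           const std::vector<DirectionalLight>& lights, float shininess) {
    Vec3 out{0.0f, 0.0f, 0.0f};
    for (const DirectionalLight& l : lights) {
        float diffuse = std::max(0.0f, dot(n, l.toLight));
        float specular = 0.0f;
        if (diffuse > 0.0f) {
            // Halfway vector between the light and view directions.
            Vec3 h = normalize({l.toLight[0] + toEye[0],
                                l.toLight[1] + toEye[1],
                                l.toLight[2] + toEye[2]});
            specular = std::pow(std::max(0.0f, dot(n, h)), shininess);
        }
        for (std::size_t c = 0; c < 3; ++c) {
            out[c] += l.color[c] * (albedo[c] * diffuse + specular);
        }
    }
    return out;
}
```

Because each light's contribution is simply added, giving the lights different colors tints the surface differently on each side, as in the last image.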

Initial Output

The problem with the rasterizer is that you can't see any output until you complete the rasterization stage, which means finishing up all the previous stages first. So I started with a simple triangle and proceeded from there, checking it through each of the stages.

Stuff I implemented so far:

- Vertex Shader: Here I convert the vertices into the projection view, which is nothing but bringing the model into eye coordinates and then transforming the truncated pyramid of the projection frustum into a cube. This step of converting it into a cube skews the object and provides a perspective view.

- Primitive Assembly: Here, we assemble each of the individual vertices into a primitive (in my case a triangle). The colors and normals associated with the vertex are also transferred into the primitive.

- Rasterizer: This is the meat of the process, which converts the pixels into fragments. A single pixel becomes a fragment when it is actually eligible to be displayed. The following steps need to be performed:

  - Project the triangle from the perspective space onto the screen and find where exactly on the screen the vertices lie.

  - Run a scanline algorithm to determine which pixels lie within that primitive.

  - Once you are sure that a pixel lies within the primitive, project it back into the projection space using barycentric coordinates. This new coordinate's z-value can be used to determine the depth at which the actual point on the primitive lies. If another point already lies in front of it, this pixel is obscured and does not become a fragment. So we perform this depth test for each of the points that we get from the scanline algorithm.

  - The colors can also be interpolated in a similar manner using barycentric coordinates.

  - The problem with updating the depth buffer is that all the primitives are processed in parallel, and there may be 2 or more primitives at the same pixel location trying to put their value into the depth buffer. This has to be resolved so that only one thread accesses an index in the depth buffer at a time. I achieved this using atomics in CUDA. I sort of lock the index in the depth buffer while it is being updated, so that no one else can modify it.

  - At the end of this stage, you get all the fragments which are ready to be displayed.

- Fragment Shader: Currently I am just writing out the interpolated color values from the depth buffer. I haven't done any particular shading here yet, so it's just flat shading.
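
The depth buffer race described in the rasterizer step can be sketched on the CPU with `std::atomic`. Note that this is a different technique from the per-index lock mentioned above: it is a lock-free compare-and-swap loop, shown only to illustrate how atomics resolve the conflict. In CUDA, `atomicCAS` would play the role of `compare_exchange_weak`. The packing scheme, the function names, and the assumption that depth is stored as an unsigned integer where smaller means nearer are all inventions of this sketch.

```cpp
#include <atomic>
#include <cstdint>

// Packs a depth and a primitive index into one 64-bit key.
// Depth sits in the high bits, so comparing keys compares depth first.
inline std::uint64_t packDepth(std::uint32_t depth, std::uint32_t primitiveId) {
    return (static_cast<std::uint64_t>(depth) << 32) | primitiveId;
}

// Tries to write `key` into one depth-buffer cell.
// Returns true if `key` was nearer than the stored value and was written.
inline bool depthTestAndWrite(std::atomic<std::uint64_t>& cell, std::uint64_t key) {
    std::uint64_t current = cell.load();
    while (key < current) {
        // On failure, compare_exchange_weak reloads `current`,
        // and the loop condition repeats the depth test.
        if (cell.compare_exchange_weak(current, key)) {
            return true;
        }
    }
    return false;
}
```

Keeping the primitive index in the same word as the depth means the winning primitive is known after all threads finish, so its color can be looked up without a second synchronized write.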

Scaled up cow

Flat Shading

Here each of the vertices are given Red, Green and Blue colors.
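
The blend between the three vertex colors comes from barycentric interpolation. Below is a minimal C++ sketch in 2D screen space, assuming a non-degenerate triangle. The helper names `edge`, `barycentric`, `isInside` and `interpolate` are made up for this example. Interpolating in screen space like this is not perspective-correct, which is fine for a sketch but matters for textures.

```cpp
#include <array>
#include <cstddef>

using Vec2 = std::array<float, 2>;
using Vec3 = std::array<float, 3>;

// Twice the signed area of triangle (a, b, c).
inline float edge(const Vec2& a, const Vec2& b, const Vec2& c) {
    return (b[0] - a[0]) * (c[1] - a[1]) - (b[1] - a[1]) * (c[0] - a[0]);
}

// Barycentric weights of point p with respect to triangle (a, b, c).
// The weights sum to 1. Assumes the triangle has non-zero area.
inline Vec3 barycentric(const Vec2& a, const Vec2& b, const Vec2& c, const Vec2& p) {
    float area = edge(a, b, c);
    return {edge(b, c, p) / area, edge(c, a, p) / area, edge(a, b, p) / area};
}

// A point is inside (or on the border of) the triangle when no weight is negative.
inline bool isInside(const Vec3& w) {
    return w[0] >= 0.0f && w[1] >= 0.0f && w[2] >= 0.0f;
}

// Blends three per-vertex attributes (colors here) with the given weights.
inline Vec3 interpolate(const Vec3& w, const Vec3& ca, const Vec3& cb, const Vec3& cc) {
    Vec3 out{0.0f, 0.0f, 0.0f};
    for (std::size_t i = 0; i < 3; ++i) {
        out[i] = w[0] * ca[i] + w[1] * cb[i] + w[2] * cc[i];
    }
    return out;
}
```

The same weights can interpolate depth or normals, so one barycentric computation per pixel serves every per-vertex attribute.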

Friday, November 2, 2012

Introduction

Creating a rasterizer by yourself seems so cool. This is the latest project in the GPU course offered at UPenn. As usual, some base code has been provided to us, and we are required to create the different stages of the graphics pipeline, including but not restricted to "The Vertex Shader", "Primitive Assembly", "The Rasterizer itself" and "The Fragment Shader".

In this project, we are given code for:

* A library for loading/reading standard Alias/Wavefront .obj format mesh files and converting them to OpenGL style VBOs/IBOs

* A suggested order of kernels with which to implement the graphics pipeline

* Working code for CUDA-GL interop

We have to implement the following stages of the graphics pipeline and features:

* Vertex Shading

* Primitive Assembly with support for triangle VBOs/IBOs

* Perspective Transformation

* Rasterization through either a scanline or a tiled approach

* Fragment Shading

* A depth buffer for storing and depth testing fragments

* Fragment to framebuffer writing

* A simple lighting/shading scheme, such as Lambert or Blinn-Phong, implemented in the fragment shader

We are also required to implement at least 3 of the following features:

* Additional pipeline stages. Each one of these stages can count as 1 feature:

  * Geometry shader

  * Transformation feedback

  * Back-face culling

  * Scissor test

  * Stencil test

  * Blending

* Correct color interpolation between points on a primitive

* Texture mapping WITH texture filtering and perspective correct texture coordinates

* Support for additional primitives. Each one of these can count as HALF of a feature.

  * Lines

  * Line strips

  * Triangle fans

  * Triangle strips

  * Points

* Anti-aliasing

* Order-independent translucency using a k-buffer

* MOUSE BASED interactive camera support. Interactive camera support based only on the keyboard is not acceptable for this feature.
